SolAR

Spatial Audio AR Synth Experience (December 2018)

SolAR is, in essence, an exploration of a possible use case for mixed reality technology that moves away from the norm of visually augmented gaming and utility applications. I developed this application because I believe it has the potential to scale to multiple settings, including networked interaction and installation models. Modern advancements in AR technology, such as the release of Apple’s ARKit 2.0, make it more accessible for developers to create complex augmented and mixed reality applications.

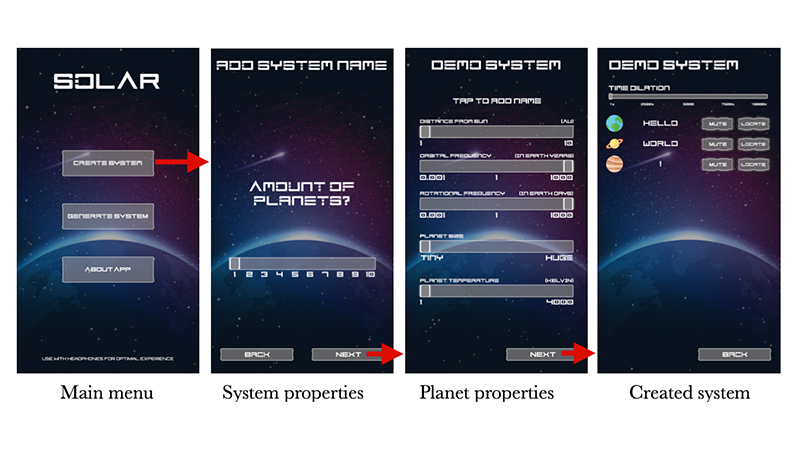

In its current form, SolAR is a spatial audio experience in which the user is the centre of a randomly generated or user-parametrised solar system. Through data sonification, celestial bodies orbiting the centre of the system are heard orbiting the user at variable paces. Each body's audio source is a real-time synthesiser that plays a random sequence drawn from a predetermined scale. The synthesiser's parameters are set at run time from the physical properties of the individual celestial object. Rotating the iPhone rotates the Unity 3D world accordingly.
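To make the sonification idea more concrete, here is a minimal Python sketch of how a body's physical properties could drive its synthesiser and orbit. It is an illustration only, not SolAR's actual Unity code: the `Body` fields, the pentatonic scale, the radius-to-pitch mapping and the Kepler-style speed rule are all assumptions chosen for the example.

```python
import random
from dataclasses import dataclass

# Assumed scale: major pentatonic, as semitone offsets from a root note.
PENTATONIC = [0, 2, 4, 7, 9]


@dataclass
class Body:
    radius: float          # hypothetical units, assumed range 0.1 to 10
    orbit_distance: float  # hypothetical units, assumed to be > 0


@dataclass
class SynthParams:
    root_midi: int         # root note of the body's sequence
    angular_speed: float   # orbit pace around the listener (radians/second)


def midi_to_hz(note: float) -> float:
    """Convert a MIDI note number to frequency (A4 = note 69 = 440 Hz)."""
    return 440.0 * 2 ** ((note - 69) / 12)


def synth_params(body: Body) -> SynthParams:
    """Map physical properties to synth parameters (assumed mapping)."""
    # Normalise the radius to 0..1, clamping values outside the assumed range.
    t = min(max((body.radius - 0.1) / (10 - 0.1), 0.0), 1.0)
    # Assumption: larger bodies sound lower (MIDI 72 down to MIDI 36).
    root = round(72 - 36 * t)
    # Assumption: Kepler-like pacing, so distant bodies orbit more slowly.
    speed = 1.0 / body.orbit_distance ** 1.5
    return SynthParams(root_midi=root, angular_speed=speed)


def random_sequence(root_midi: int, length: int, rng: random.Random) -> list[int]:
    """Pick `length` random notes from the scale, relative to the root."""
    return [root_midi + rng.choice(PENTATONIC) for _ in range(length)]


if __name__ == "__main__":
    rng = random.Random(2018)
    planet = Body(radius=3.0, orbit_distance=9.0)
    params = synth_params(planet)
    notes = random_sequence(params.root_midi, 8, rng)
    print(params)
    print([round(midi_to_hz(n), 1) for n in notes])
```

Seeding the random generator makes a "randomly generated" system reproducible, while a user-parametrised system would simply supply its own `Body` values.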

My primary motivations for developing this software are my fascination with the cosmos, the development of modern AR technology, and my interest in aurally perceiving information and object properties through data sonification.